How to install Apache Superset on a GKE Kubernetes Cluster

Share

Interests

Posted in these interests:

In this guide, I’m going to show you how to install Apache Superset in your Kubernetes cluster (on GKE). I’m using Cloud SQL (Postgres) for the database backend, Memorystore (redis), and BigQuery as the data source. However, even if you’re using some different components this guide should still provide enough guidance to get you started with Superset on Kubernetes.

There’s a Github repository that accompanies this guide. If you’re already a Kubernetes master, you can probably just head over to the repository and get the configs. But the purpose of this guide is to provide instructions as well as an explanation of the configs.

With that said, if you’re ready to install Superset on your Kubernetes cluster, I recommend reading through this guide before taking action. Then, clone the Github repo and start working through the instructions when you’re ready.

What is Superset?

Superset is an open-source business intelligence tool. In short, it’s a web application that lets you connect to various data sources to query and visualize data.

What is GKE?

GKE stands for Google Kubernetes Engine. It is Google Cloud Platform’s (GCP) hosted Kubernetes service.

1 – Using Helm

I’m going to start by mentioning Helm because it’s the simplest option, and if you’re happy to use Helm and this chart works for you, then you should use it!

Install helm

Head here to learn how to install helm. Once installed, you need to initialize helm in your cluster.

helm initInstall superset using helm

Head here for details on the superset helm chart. I’ll provide an example of the basic installation, but there are many more configuration options to set if desired.

helm install stable/supersetHopefully this works swimmingly for you. However, I’ve found that this chart is not quite what I need. Because of the way the Google Cloud SDK authenticates, I need to mount my service account key file secret as a volume on the Superset pod, and it didn’t seem clear how to accomplish this. I’m also slightly biased against using helm, so I didn’t keep digging.

With that said, the remainder of this guide will show you how to install superset manually — that is, by building the configs by hand.

2 – Setup for a manual Superset installation

Before we begin, I want to discuss the setup. Configuring each of these tools is outside the scope of this guide, but I will explain each component and provide some useful tips.

Cloud SQL

I’m using a Cloud SQL Postgres instance. Setting this up is pretty simple, but make sure you enable Private IP on your instance.

Whether you’re using Cloud SQL or something else, you’ll need to create a superset database, and a user with all privileges granted to this database.

Memorystore

Configuring a Memorystore redis instance on GCP is pretty easy. You can also run a redis deployment in your GKE cluster with very little work. Either solution is fine.

GKE Cluster

We’re going to use a Kubernetes cluster running on GKE. This process is a little more involved, and again, I’m not going to go into detail here.

I will say that you need make sure your cluster is a VPC Native cluster so your cluster can connect to your DB using the private IP.

Local environment

You need to install the Google Cloud SDK on your local computer if you haven’t already. Then authenticate with your GKE cluster:

gcloud container clusters get-credentials <cluster name> --zone <zone> --project <project id>3 – The superset config

The following is our superset config. In short, it configures our database backend connection, redis connection, and celery.

If you’re using Cloud SQL (Postgres), like we configured previously, then you should need to modify this file at all. If you’re using another database backend, you’ll have to modify the config to build the correct sql alchemy database URI.

import os

def get_env_variable(var_name, default=None):

"""Get the environment variable or raise exception.

Args:

var_name (str): the name of the environment variable to look up

default (str): the default value if no env is found

"""

try:

return os.environ[var_name]

except KeyError:

if default is not None:

return default

raise RuntimeError(

'The environment variable {} was missing, abort...'

.format(var_name)

)

def get_secret(secret_name, default=None):

"""Get secrets mounted by kubernetes.

Args:

secret_name (str): the name of the secret, corresponds to the filename

default (str): the default value if no secret is found

"""

secret = None

try:

with open('/secrets/{0}'.format(secret_name), 'r') as secret_file:

secret = secret_file.read().strip()

except (IOError, FileNotFoundError):

pass

if secret is None:

if default is None:

raise RuntimeError(

'Missing a required secret: {0}.'.format(secret_name)

)

secret = default

return secret

# Postgres

POSTGRES_USER = get_secret('database/username')

POSTGRES_PASSWORD = get_secret('database/password')

POSTGRES_HOST = get_env_variable('DB_HOST')

POSTGRES_PORT = get_env_variable('DB_PORT', 5432)

POSTGRES_DB = get_env_variable('DB_NAME')

SQLALCHEMY_DATABASE_URI = 'postgresql://{0}:{1}@{2}:{3}/{4}'.format(

POSTGRES_USER,

POSTGRES_PASSWORD,

POSTGRES_HOST,

POSTGRES_PORT,

POSTGRES_DB,

)

# Redis

REDIS_HOST = get_env_variable('REDIS_HOST')

REDIS_PORT = get_env_variable('REDIS_PORT', 6379)

# Celery

class CeleryConfig:

BROKER_URL = 'redis://{0}:{1}/0'.format(REDIS_HOST, REDIS_PORT)

CELERY_IMPORTS = ('superset.sql_lab',)

CELERY_RESULT_BACKEND = 'redis://{0}:{1}/1'.format(REDIS_HOST, REDIS_PORT)

CELERY_ANNOTATIONS = {'tasks.add': {'rate_limit': '10/s'}}

CELERY_TASK_PROTOCOL = 1

CELERY_CONFIG = CeleryConfig

4 – Create a configmap for the superset config

We’re going to create a configmap named superset-config. This is how we’ll add our superset-config.py file to the superset pods.

I’m going to create the configmap object directly using kubectl.

kubectl create configmap superset-config --from-file supersetYou can confirm with:

kubectl get configmap5 – Add secrets to the cluster

We’ll need to add our database secrets and our google cloud service account key secret.

If you look in the secrets directory, you’ll see a directory structure like this:

secrets

├── database

│ └── password

│ └── username

└── gcloud

└── google-cloud-key.jsonWe’re going to create two secrets: database and gcloud. You’ll need to edit the files in each of these directories so they include the applicable secrets.

Then create the secrets using kubectl.

kubectl create secret generic database --from-file=secrets/database

kubectl create secret generic gcloud --from-file=secrets/gcloudNote: This directory is here simply to make it easy for you to create the appropriate secrets. You would never check these into any repository.

You can confirm the secrets exist with:

kubectl get secret6 – The superset deployment

Check out the file called superset-deployment.yaml. This deployment defines and manages our superset pod:

apiVersion: apps/v1beta1

kind: Deployment

metadata:

name: superset-deployment

namespace: default

spec:

selector:

matchLabels:

app: superset

template:

metadata:

labels:

app: superset

spec:

containers:

- env:

- name: DB_HOST

value: 10.10.10.10

- name: REDIS_HOST

value: redis

- name: DB_NAME

value: superset

- name: GOOGLE_APPLICATION_CREDENTIALS

value: /secrets/gcloud/google-cloud-key.json

- name: PYTHONPATH

value: "${PYTHONPATH}:/home/superset/superset/"

image: amancevice/superset:latest

name: superset

ports:

- containerPort: 8088

volumeMounts:

- mountPath: /secrets/database

name: database

readOnly: true

- mountPath: /secrets/gcloud

name: gcloud

readOnly: true

- mountPath: /home/superset/superset

name: superset-config

volumes:

- name: database

secret:

secretName: database

- name: gcloud

secret:

secretName: gcloud

- name: superset-config

configMap:

name: superset-configThe only things you’ll need to configure are the DB_HOST and REDIS_HOST environment variables, so update these values with the IP or hostname of your instances.

7 – The superset service

This service will send incoming traffic on port 8088 to the superset pods.

apiVersion: v1

kind: Service

metadata:

name: superset

labels:

app: superset

spec:

type: NodePort

ports:

- name: http

port: 8088

targetPort: 8088

selector:

app: superset8 – Apply the configs

Once you’ve added the configmap and secrets, and you’ve customized any configs as desired, you’re ready to apply the configs.

kubectl apply --recursive -f kubernetes/It will take a little bit of time for the image to pull and for the containers to start up, but you can check your progress with:

kubectl get pod | grep superset9 – Create the admin user

Now let’s create an admin user.

SUPERSET_POD=$(kubectl get pod | grep superset | awk '{print $1}')

kubectl exec -it $SUPERSET_POD -- fabmanager create-admin --app supersetYou’ll be given a series of prompts to complete. Make sure to save your password!

10 – Forward port 8088 and log in

Since our service isn’t exposed to the world, we’ll need to forward port 8088 to our superset service.

kubectl port-forward service/superset 8088Now we can open a browser and go to: http://localhost:8088

You should be able to log in to superset using the admin username and password you set previously.

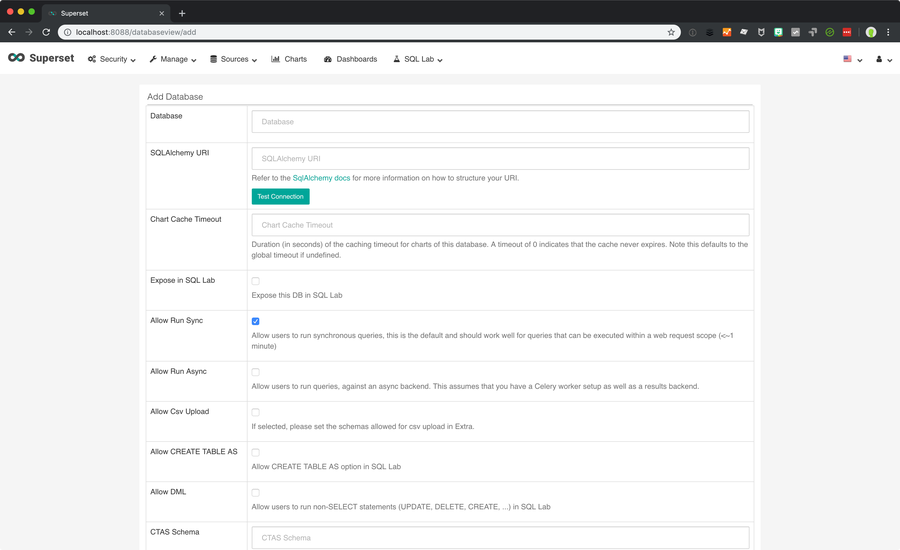

11 – Setting up a BigQuery data source

Now you’re ready to add a data source. If you’re using BigQuery and you mounted your service account key as I’ve show in this guide, you’re very close.

In Superset, click on Sources > Databases.

Then click the + icon that says Add a new record.

Now fill out the details for your database. The SQLAlchemy URI structure for bigquery is simple:

bigquery://<project id>12 – Next steps

Now you’ll need to configure your tables and start building charts and dashboards. Refer to the Superset docs for this part.

If you’ve gotten to this point, and you’re pretty sure that Superset is going to be a permanent part of your stack, you may want to set up DNS and access this using a nicer hostname and without having to set up port forwarding every time. If there’s enough interest, I can update this guide with instructions!

Grafana and Prometheus can be powerful data analysis tools. Check out this guide to install both Grafana and Prometheus on your Kubernetes cluster.